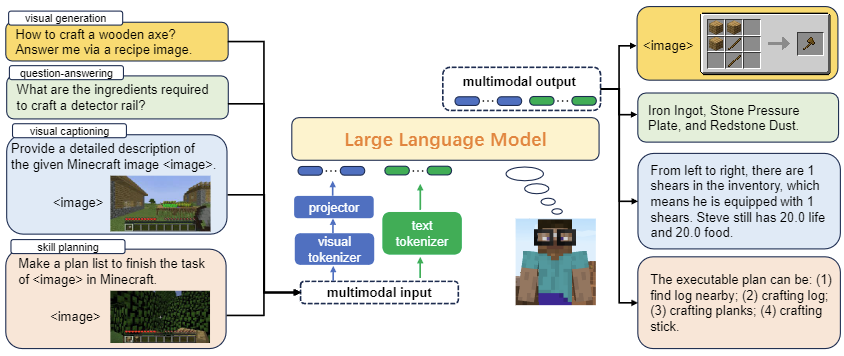

Steve-Eye: Equipping LLM-based Embodied Agents with Visual Perception in Open Worlds

Published in Proceedings of the International Conference on Learning Representations (ICLR), 2024

Recommended citation: S Zheng, J Liu, Y Feng, Z Lu. "Steve-Eye: Equipping LLM-based Embodied Agents with Visual Perception in Open Worlds." 2023 arXiv preprint. arXiv:2310.13255. https://arxiv.org/abs/2310.13255

Recent studies have presented compelling evidence that large language models (LLMs) can equip embodied agents with the self-driven capability to interact with the world, which marks an initial step toward versatile robotics. However, these efforts tend to overlook the visual richness of open worlds, rendering the entire interactive process akin to “a blindfolded text-based game.” Consequently, LLM-based agents frequently encounter challenges in intuitively comprehending their surroundings and producing responses that are easy to understand. In this paper, we propose Steve-Eye, an end-to-end trained large multimodal model designed to address this limitation. Steve-Eye integrates the LLM with a visual encoder, which enables it to process visual-text inputs and generate multimodal feedback. In addition, we use a semi-automatic strategy to collect an extensive dataset comprising 850K open-world instruction pairs, empowering our model to encompass three essential functions for an agent: multimodal perception, a foundational knowledge base, and skill prediction and planning. Lastly, we develop three open-world evaluation benchmarks and carry out extensive experiments from a wide range of perspectives to validate our model’s capability to strategically act and plan. Code and datasets will be released.