UniCode: Learning a Unified Codebook for Multimodal Large Language Models

Published in arXiv preprint, 2024

Recommended citation: S Zheng, B Zhou, Y Feng, Y Wang, Z Lu. "UniCode: Learning a Unified Codebook for Multimodal Large Language Models" 2024 arXiv preprint. arXiv:2403.09072. https://arxiv.org/abs/2403.09072

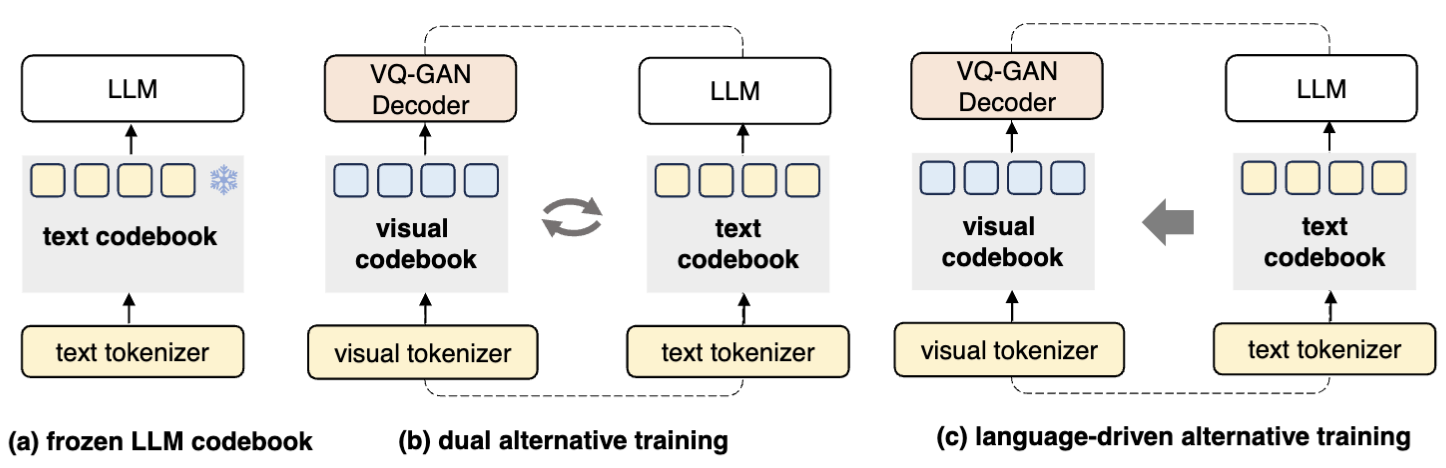

In this paper, we propose **UniCode**, a novel approach within the domain of multimodal large language models (MLLMs) that learns a unified codebook to efficiently tokenize visual, text, and potentially other types of signals. This addresses a critical limitation of existing MLLMs: their reliance on a text-only codebook, which restricts their ability to generate images and text in a multimodal context. To this end, we propose a language-driven iterative training paradigm, coupled with an in-context pre-training task we term "image decompression", which enables our model to interpret compressed visual data and generate high-quality images. The unified codebook allows our model to extend visual instruction tuning to non-linguistic generation tasks. Moreover, UniCode is adaptable to diverse stacked quantization approaches, which compress visual signals into a more compact token representation. Despite using significantly fewer parameters and less data during training, UniCode demonstrates promising capabilities in visual reconstruction and generation. It also achieves performance comparable to leading MLLMs across a range of VQA benchmarks.
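
To make the idea of stacked quantization more concrete, the following is a minimal, generic sketch of residual quantization, one common stacked scheme. It is not UniCode's implementation: the function name `residual_quantize`, the toy codebooks, and the use of NumPy are all assumptions made for illustration. Each level picks the nearest codebook entry for whatever the previous levels left unexplained, so one feature vector is represented by a short stack of discrete codes.

```python
import numpy as np

def residual_quantize(x, codebooks):
    """Quantize row vectors with a stack of codebooks (hypothetical helper).

    x:         array of shape (n, d), the vectors to quantize.
    codebooks: list of arrays, each of shape (k, d), one per level.
    Returns (codes, recon): codes has shape (n, levels), recon has shape (n, d).
    """
    residual = np.asarray(x, dtype=float).copy()
    recon = np.zeros_like(residual)
    codes = []
    for cb in codebooks:
        cb = np.asarray(cb, dtype=float)
        # Squared Euclidean distance from each residual to each entry: (n, k).
        dist = ((residual[:, None, :] - cb[None, :, :]) ** 2).sum(axis=-1)
        idx = dist.argmin(axis=1)
        chosen = cb[idx]
        codes.append(idx)
        recon += chosen      # accumulate the reconstruction level by level
        residual -= chosen   # the next level quantizes what is left over
    return np.stack(codes, axis=1), recon

# Toy example: two levels, two entries each (values chosen by hand).
toy_codebooks = [
    np.array([[0.0, 0.0], [1.0, 1.0]]),
    np.array([[0.0, 0.0], [0.5, 0.0]]),
]
toy_codes, toy_recon = residual_quantize(np.array([[1.4, 1.0]]), toy_codebooks)
```

In this toy case the input `[1.4, 1.0]` is encoded by one code per level, and the reconstruction is the sum of the selected entries. In a learned system the codebooks would be trained rather than fixed by hand.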